But tests have to change when your code changes...

...which is often actually an asset.

A common argument I hear against test-driven development, or more broadly against writing extensive tests, is that the effort is wasted once your code changes and renders the tests obsolete.

This week I’m bumping into a case of the opposite.

That is to say, the fact that my tests are breaking during a code change is an asset, rather than a liability.

I’m working on one of my open-source projects, trying to improve a couple of aspects of the API. Fortunately, I wrote the existing implementation with TDD, so I do have extensive tests.

This is quite nice, because I can try out an API change, and see what breaks, to get a sense of the full impact of the change.

Yesterday I made a tweak, then started fixing all the broken tests. About 15 minutes in, I found a surprising impact that rendered my change untenable. So I reverted my changes, and can start again.

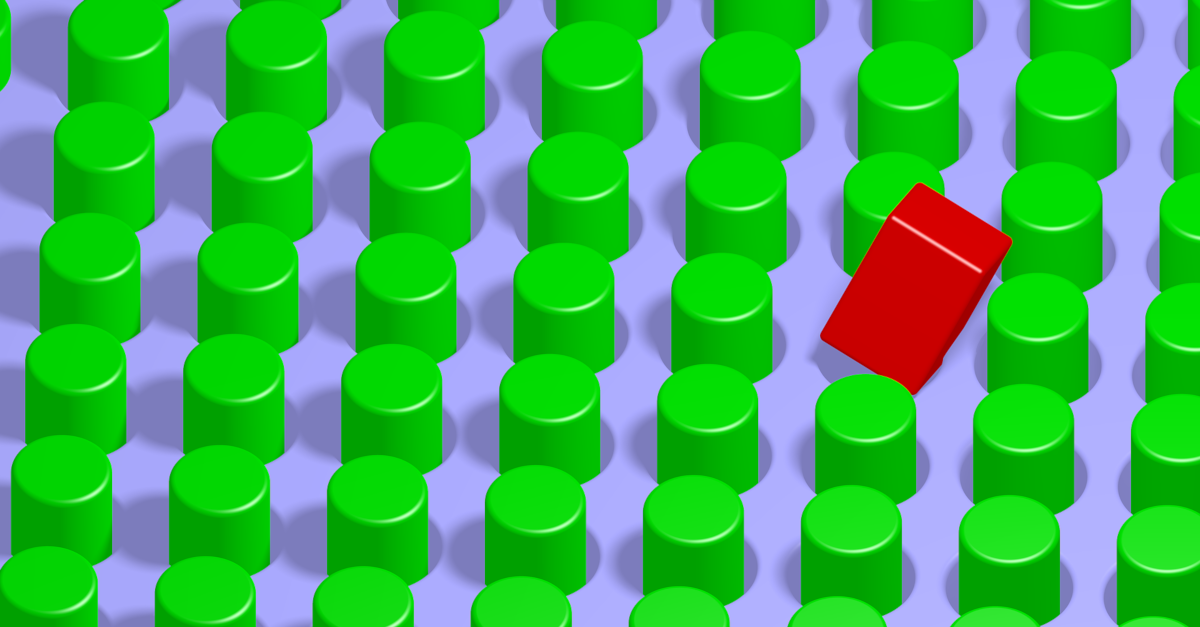

Without those tests, it would have taken much longer, potentially hours, to discover the square hole my round API change was trying to fit into.