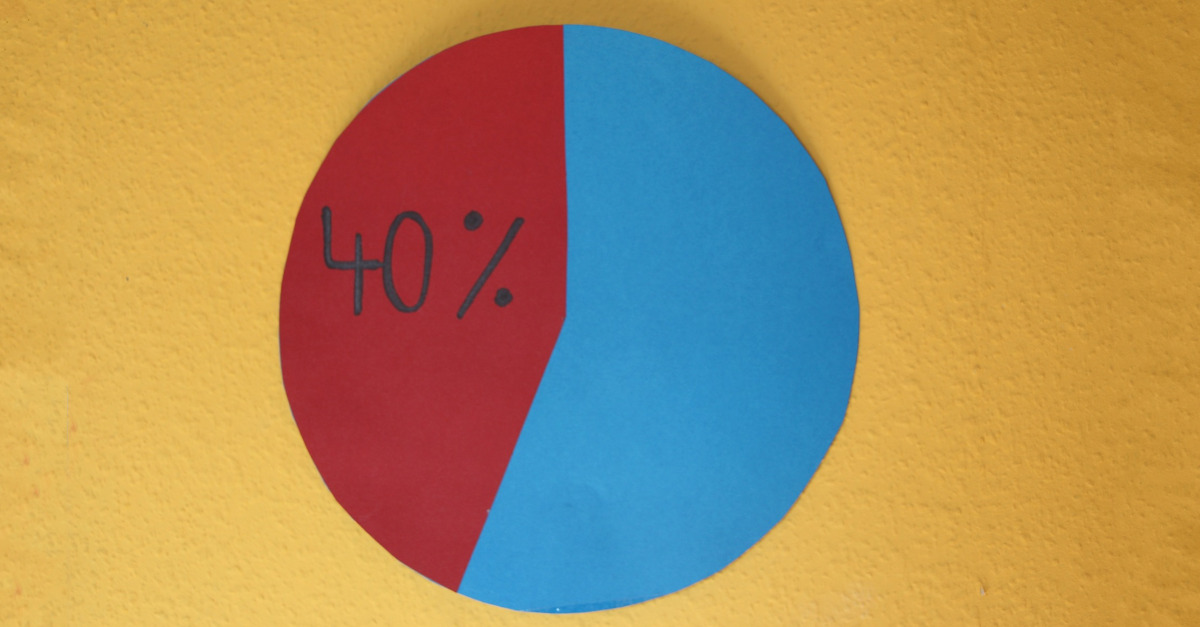

20% of the code is executed 80% of the time

If code offers business value, it’s worth ensuring correctness with tests. If it’s not worth doing correctly, just delete it.

I recently came across a piece of advice related to (I think) automated tests:

20% of the code is executed 80% of the time, so that is the code to test.

What do you think about this? Take a moment to form an opinion before you continue reading.

So, on the surface, these percentages are probably approximately true. The 80/20 rule, or Pareto principle, states that for many outcomes roughly 80% of the consequences arise from 20% of the causes. I have no reason to think that code execution is any different.

So let’s take for granted that 20% of our code is executed 80% of the time, and the other 80% of our code is only executed 20% of the time.

Does this actually mean it’s safe not to test 80% of our code?

No. Obviously not.

First, why does the less frequently executed 80% of our code exist? If it serves a business purpose, it’s probably worth testing, even if it runs less often.

Second, why would we ever assume that business value correlates with how often code is executed?

By that logic, there’s never any reason to test an operating system boot loader, since it executes only once per boot (far less than 20% of the time).

Or imagine your code does currency conversion, and 80% of your transactions are between US dollars and euros. Does that mean it’s acceptable if the logic to convert yen to quetzales is broken? Of course not.
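To make the currency example concrete, here is a minimal Python sketch. The `convert` function, the `RATES` table, and the exchange rates themselves are all hypothetical (the rates are made up for illustration, not real market data). The only point is that the rarely exercised JPY-to-GTQ path gets a test of its own, right next to the common USD-to-EUR path.

```python
from decimal import Decimal

# Hypothetical exchange rates, for illustration only (not real market data).
# Each key is (source, target); the value is units of target per one unit of source.
RATES = {
    ("USD", "EUR"): Decimal("0.90"),
    ("EUR", "USD"): Decimal("1.11"),
    ("JPY", "GTQ"): Decimal("0.05"),
}


def convert(amount: Decimal, src: str, dst: str) -> Decimal:
    """Convert `amount` from currency `src` to `dst` using the table above."""
    if src == dst:
        return amount
    try:
        rate = RATES[(src, dst)]
    except KeyError:
        raise ValueError(f"no rate for {src}->{dst}") from None
    # Round the result to two decimal places.
    return (amount * rate).quantize(Decimal("0.01"))


def test_usd_to_eur():
    # The "hot" path: most transactions in this hypothetical scenario.
    assert convert(Decimal("100"), "USD", "EUR") == Decimal("90.00")


def test_jpy_to_gtq():
    # The "cold" path: rarely executed, but still a business requirement.
    assert convert(Decimal("1000"), "JPY", "GTQ") == Decimal("50.00")


def test_unknown_pair_is_rejected():
    # Unsupported pairs should fail loudly rather than return a wrong amount.
    try:
        convert(Decimal("1"), "USD", "XYZ")
    except ValueError:
        return
    raise AssertionError("expected ValueError for an unsupported pair")


if __name__ == "__main__":
    test_usd_to_eur()
    test_jpy_to_gtq()
    test_unknown_pair_is_rejected()
    print("all tests passed")
```

The test functions follow pytest’s `test_*` naming, so pytest would collect them, but they also run as a plain script. Neither test is weighted by how often its path runs in production; each one simply checks a behavior the business depends on.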

If your code offers business value, it’s worth doing it correctly. If it’s worth doing correctly, it’s worth ensuring correctness with tests. If it’s not worth doing correctly, just delete it.